Nvidia (NASDAQ:NVDA) led the first wave of AI enthusiasm with its powerful GPUs. Investors piled in as data centers raced to secure chips that could train and run ever-larger models. Yet as AI expands, the opportunity spreads beyond GPUs into unexpected corners of the stack.

Memory — long viewed as a cyclical commodity prone to booms and busts — has become a high-margin bottleneck, and Micron Technology (NYSE:MU) stands out as one of the clearest beneficiaries. Here’s what changed.

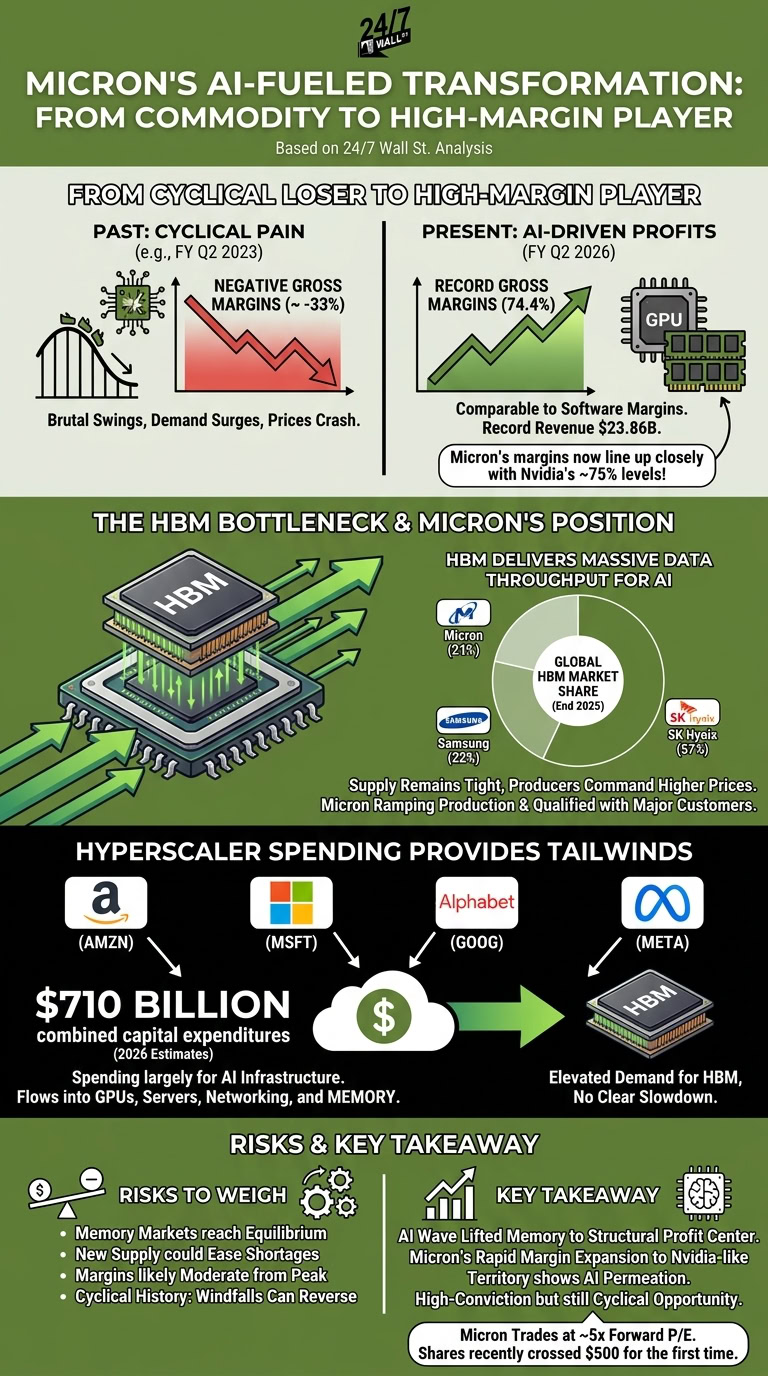

From Cyclical Loser to High-Margin Player

Memory makers have historically endured brutal swings. Demand surges, supply catches up, prices crash, and profits evaporate. Micron felt this pain sharply in fiscal Q2 2023, when it reported negative gross margins amid industry oversupply.

Fast-forward to fiscal Q2 2026. Micron posted a gross margin of 74.4% on record revenue of $23.86 billion, nearly triple the revenue of the year-ago quarter. That margin lines up closely with Nvidia’s recent levels, which hovered around 75% at the end of fiscal Q4 2026.

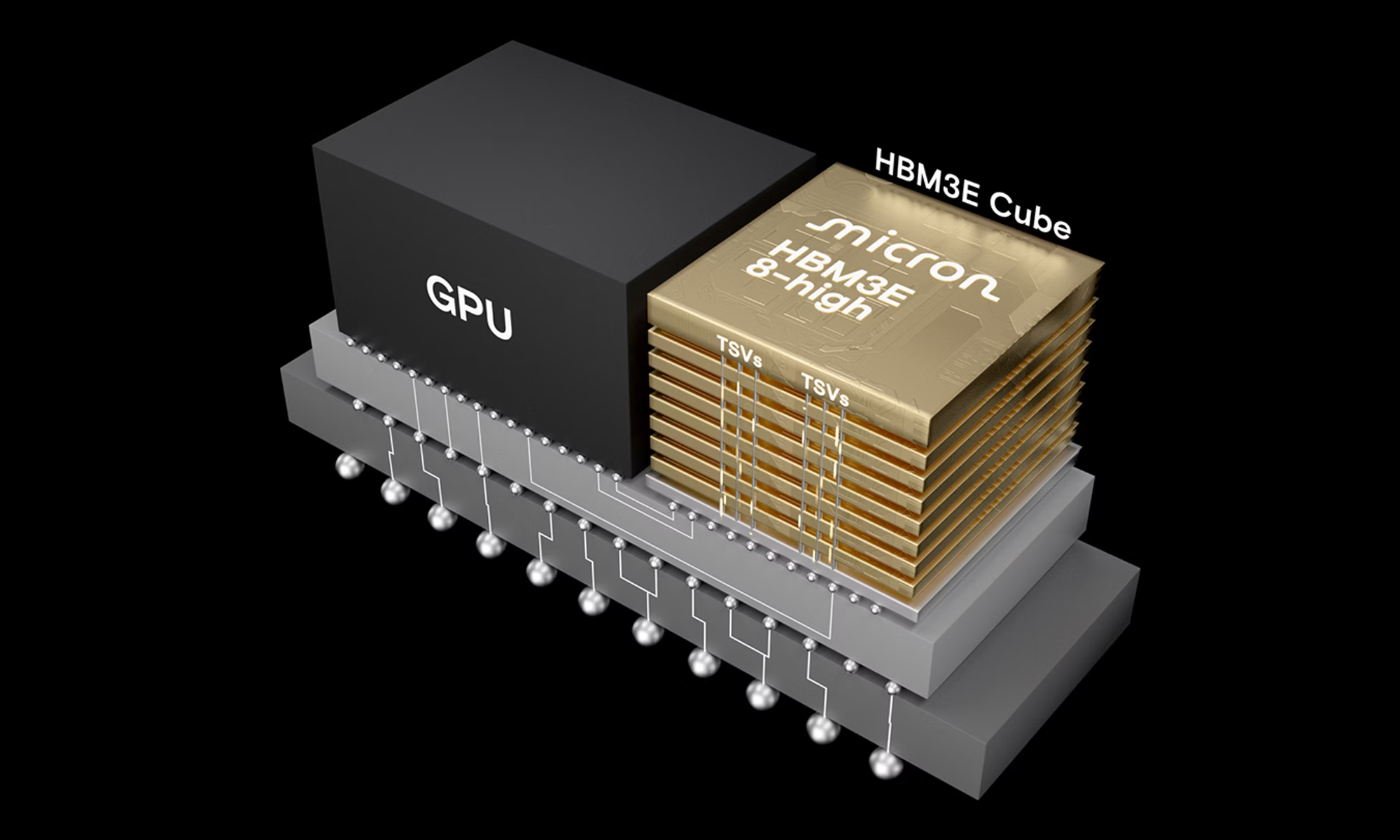

Surprisingly, these are margins more typical of software businesses with near-zero variable costs than of traditional hardware makers. The reason is that AI workloads demand high-bandwidth memory (HBM), which delivers far more data throughput than conventional memory. Standard DRAM no longer suffices for the most advanced training and inference tasks.

Here’s what the numbers tell us: Micron’s gross margin swung from roughly -33% three years ago to 74.4% today, a move of more than 100 percentage points. That reversal reflects both pricing power and a rich product mix tilted toward premium HBM.
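To make that swing concrete, here is a minimal sketch of the arithmetic in Python. It uses only figures quoted in this article (the 74.4% margin and $23.86 billion revenue for fiscal Q2 2026, and the roughly -33% margin three years earlier). The function names are illustrative, and the cost-per-dollar view is a simplification that treats gross margin as one minus cost of goods sold per dollar of revenue.

```python
# Figures quoted in the article; the -33% value is approximate.
revenue_bn = 23.86    # fiscal Q2 2026 revenue, in billions of dollars
margin_now = 0.744    # fiscal Q2 2026 gross margin
margin_then = -0.33   # approximate fiscal Q2 2023 gross margin


def gross_profit(revenue, margin):
    """Gross profit implied by a revenue figure and a gross margin."""
    return revenue * margin


def cost_per_revenue_dollar(margin):
    """Cost of goods sold per dollar of revenue at a given gross margin."""
    return 1.0 - margin


# Difference between the two margins, expressed in percentage points.
swing_points = (margin_now - margin_then) * 100

print(f"Margin swing: {swing_points:.1f} percentage points")
print(f"Implied gross profit: ${gross_profit(revenue_bn, margin_now):.2f}B")
print(f"Cost per revenue dollar then: ${cost_per_revenue_dollar(margin_then):.2f}")
print(f"Cost per revenue dollar now:  ${cost_per_revenue_dollar(margin_now):.2f}")
```

Read this way, a negative gross margin means Micron was spending more than a dollar to produce each dollar of sales, while today it spends roughly a quarter.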

The HBM Bottleneck and Micron’s Position

Only three companies produce HBM at scale globally: Samsung, SK Hynix, and Micron. At the end of 2025 (the most current data available), SK Hynix led with about 57% share, with Samsung and Micron virtually tied at 22% and 21%, respectively.

AI systems cannot substitute away from HBM easily. HBM stacks DRAM dies vertically and sits in the same package as the GPU, so it can feed data fast enough for massive parallel processing. Supply remains tight relative to exploding demand, which lets producers command higher prices.

Micron has ramped production and qualified its HBM with major customers. This positions it to capture a growing slice of the market as hyperscalers build out infrastructure.

Hyperscaler Spending Provides Tailwinds

The Big 4 hyperscalers — Amazon (NASDAQ:AMZN), Microsoft (NASDAQ:MSFT), Alphabet (NASDAQ:GOOG), and Meta Platforms (NASDAQ:META) — plan roughly $710 billion in combined capital expenditures this year, largely for AI infrastructure.

That spending flows into GPUs, servers, networking — and the memory that ties it all together. With no clear slowdown in sight, demand for HBM should remain elevated.

Risks Investors Should Weigh

That said, memory markets eventually reach equilibrium. New supply from the three major players plus any successful efforts by others could ease shortages over time. Margins will likely moderate from peak levels as that happens.

Micron trades at a strikingly low forward P/E of around 5x, even with shares recently crossing above $500 for the first time. That valuation embeds yesterday’s AI expectations but has yet to catch up with the shift currently underway toward agentic AI systems. There is little room for disappointment if AI capex moderates or competition intensifies, and cyclical history reminds us that today’s windfalls can reverse. Still, in the current environment, Micron stands to be rerated much higher.
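A quick sanity check shows what those two numbers imply together. The sketch below, in Python, backs out the forward earnings per share from the roughly $500 share price and roughly 5x forward P/E cited above, then shows how the multiple would look at an unchanged price if earnings fell. The earnings declines are purely hypothetical scenarios chosen for illustration, not forecasts, and the function names are this sketch's own.

```python
price = 500.0      # approximate share price cited in the article
forward_pe = 5.0   # approximate forward P/E cited in the article


def implied_eps(price, pe):
    """Forward earnings per share implied by a price and a forward P/E."""
    return price / pe


def pe_after_earnings_change(price, eps, change):
    """P/E at an unchanged price if EPS changes by `change` (-0.5 is a 50% drop)."""
    new_eps = eps * (1.0 + change)
    if new_eps <= 0:
        raise ValueError("P/E is not meaningful for zero or negative earnings")
    return price / new_eps


eps = implied_eps(price, forward_pe)
print(f"Implied forward EPS: ${eps:.2f}")

# Hypothetical earnings declines, for illustration only.
for change in (-0.25, -0.50, -0.75):
    pe = pe_after_earnings_change(price, eps, change)
    print(f"EPS change {change:+.0%}: P/E at the same price = {pe:.1f}x")
```

The point of the exercise is that a 5x multiple is only as cheap as the earnings estimate behind it. If a cyclical downturn cut forward earnings sharply, the same share price would correspond to a much higher multiple.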

Key Takeaway

In short, the AI wave has lifted memory from commodity status to a structural profit center. Micron’s rapid margin expansion to Nvidia-like territory shows how deeply the technology permeates the stack.

Smart investors see Micron as more than a cyclical play today. Its HBM exposure and pricing power offer a way to participate, beyond the pure GPU leaders, in an AI infrastructure buildout that shows no sign of abating. Treat it as a high-conviction but still cyclical opportunity. The transformation looks real, but don’t ignore the eventual pull of gravity that hits all memory supercycles.