The AI trade has been one of the most powerful market narratives in decades, but it’s also been one of the most narrowly defined. For the past two years, investors have largely treated AI as a GPU story, and more specifically, an Nvidia (NASDAQ:NVDA) story. That framing helped shape valuations, capital flows, and expectations across the entire semiconductor sector.

But markets rarely stay still. The next phase of AI isn’t just about training massive models or running inference at scale. It’s moving toward agentic AI systems: persistent, task-driven, multi-step workflows that demand far more than raw GPU throughput.

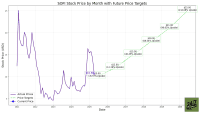

So here’s the question investors are now quietly circling: if AI is evolving, why is Micron Technology (NASDAQ:MU) still being priced like nothing has changed?

GPUs Defined AI — But May Not Define Its Next Era

Let’s start with what got us here.

The modern AI boom was effectively launched by Nvidia. Its GPUs became the backbone of both:

- Training large language models (LLMs)

- Inference at scale in production environments

Nvidia’s data center revenue surged from roughly $3.6 billion in Q4 2023 to $62.3 billion in Q4 2025 — a near-160% compound annual growth rate — driven almost entirely by AI compute demand.
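The growth-rate arithmetic behind that figure can be checked with a short sketch. It uses only the two revenue numbers quoted above; the function name `cagr` and the number of compounding years are assumptions made for illustration. Under those assumptions, the "near-160%" rate lines up with compounding over three years, while a two-year span would imply a much steeper rate, so it is worth confirming which fiscal quarters the endpoints refer to.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate as a fraction (1.0 means 100% per year)."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (end / start) ** (1.0 / years) - 1.0


# Revenue figures quoted in the article, in billions of dollars.
start_revenue_bn = 3.6
end_revenue_bn = 62.3

# The compounding period is an assumption: try both candidate spans.
three_year = cagr(start_revenue_bn, end_revenue_bn, 3)
two_year = cagr(start_revenue_bn, end_revenue_bn, 2)

print(f"Growth multiple: {end_revenue_bn / start_revenue_bn:.1f}x")
print(f"CAGR over 3 years: {three_year:.0%}")
print(f"CAGR over 2 years: {two_year:.0%}")
```

Either way, the underlying multiple is the same: revenue grew by roughly 17x between the two endpoints.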

That explosive growth created a simple investor mental model:

AI = GPUs = Nvidia

That’s a fancy way of saying the market equated AI progress with GPU demand. And for a while, that model worked perfectly. But here’s the problem with dominant narratives: they tend to oversimplify what is actually a layered computing stack.

The Shift to Agentic AI Changes the Compute Stack

AI is now transitioning from static model execution to agentic systems — software that can plan, execute, and refine multi-step tasks autonomously.

That shift changes the hardware mix in three important ways:

- GPUs still matter for heavy computation and model inference

- CPUs become central for orchestration, logic, and memory coordination

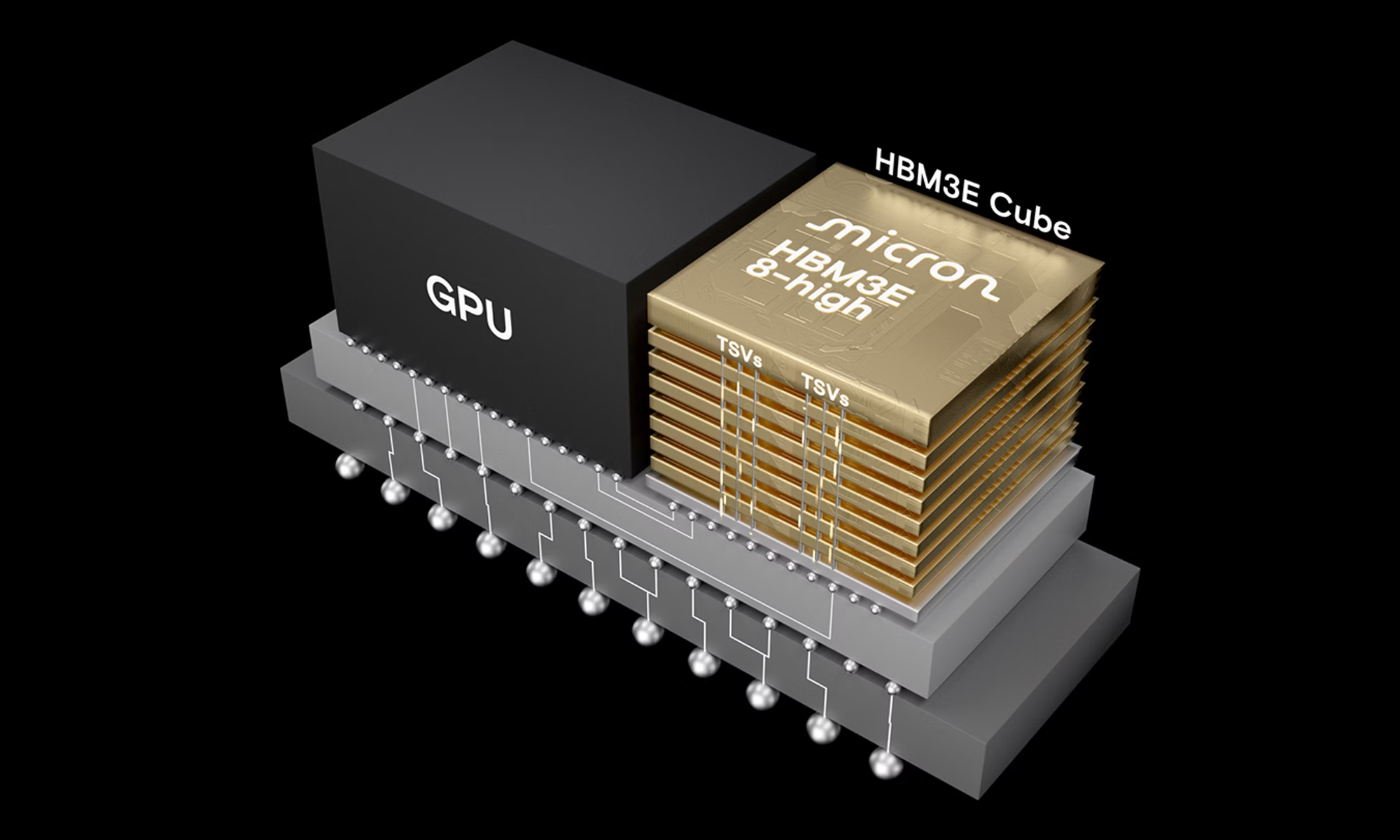

- Memory bandwidth and storage systems become bottlenecks, not afterthoughts

That second point is where most investors are still underweight.

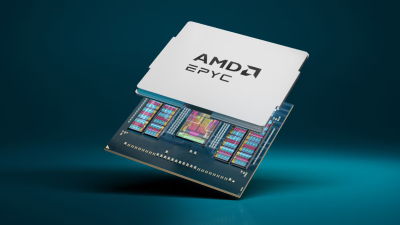

While GPUs dominate headlines, CPUs — particularly from companies like Advanced Micro Devices (NASDAQ:AMD) — are increasingly embedded in AI workloads that require real-time decision trees, tool use, and persistent agent memory.

AMD today looks structurally similar to where Nvidia was four years ago, at the start of the last AI cycle:

- Early but accelerating AI exposure

- Underappreciated software stack improvements

- Growing hyperscaler validation

Surprisingly, the market still prices AMD like a second-tier AI beneficiary, even as its data center CPU and accelerator roadmap expands into workloads beyond pure training. That disconnect matters because agentic AI doesn’t run on GPUs alone. It runs on systems.

Why Micron Looks Cheap in a ‘GPU-Only’ World

At first glance, Micron looks like it should be expensive: AI-driven demand for high-bandwidth memory (HBM) is strong, supply of advanced DRAM is tight, and HBM content per AI server is rising. Yet the stock continues to trade at relatively compressed valuation multiples versus AI peers.

Based on recent consensus estimates, Micron has often traded at a forward P/E of 8x to 12x peak earnings estimates, while Nvidia goes for 30x to 40x or more on forward earnings. AMD typically trades at 20x to 30x estimates.
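To put the gap in perspective, here is a rough sketch that turns those ranges into an implied discount. Taking the midpoint of each range is an assumption made purely for illustration, as is capping Nvidia's open-ended range at 40x. Because Micron's multiple is quoted on peak earnings estimates, the comparison is not strictly like-for-like.

```python
# Forward P/E ranges quoted in the article (low, high).
# Nvidia's range is open-ended in the text; 40 is used as an assumed cap.
forward_pe_ranges = {
    "Micron": (8.0, 12.0),
    "Nvidia": (30.0, 40.0),
    "AMD": (20.0, 30.0),
}

# Assumption: represent each range by its midpoint.
midpoints = {
    name: (low + high) / 2.0 for name, (low, high) in forward_pe_ranges.items()
}


def discount_to(peer: str, subject: str = "Micron") -> float:
    """Fraction by which the subject's midpoint multiple sits below the peer's."""
    return 1.0 - midpoints[subject] / midpoints[peer]


for peer in ("Nvidia", "AMD"):
    print(
        f"Micron at {midpoints['Micron']:.0f}x vs {peer} at "
        f"{midpoints[peer]:.0f}x: {discount_to(peer):.0%} lower multiple"
    )
```

On these midpoints, Micron's multiple sits roughly 70% below Nvidia's and 60% below AMD's. Different points within each range would shift those percentages.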

What’s going on? The answer is perception, not performance. Micron is still being viewed through the old AI lens: GPUs drive demand, so Nvidia wins, and everything else is secondary.

But that ignores a critical shift happening underneath the surface: memory is becoming a structural bottleneck in AI systems, especially as models become more agentic and memory-intensive.

Here’s what the trends point to:

- HBM content per AI GPU has increased multiple times since 2023

- DRAM pricing cycles are stabilizing faster due to AI demand floors

- Data center memory demand is growing faster than consumer memory

That combination changes Micron from a cyclical memory supplier into a critical AI infrastructure enabler.

The Market Is Still Pricing Yesterday’s AI

The core disconnect is simple. Investors are valuing AI as a GPU cycle led by Nvidia, and memory as a cyclical commodity represented by Micron. But the emerging reality looks different:

| Layer | Old AI View | Emerging AI Reality |
| --- | --- | --- |
| Compute | GPUs dominate | GPUs + CPUs + orchestration |
| Memory | Commodity cycle | Structural bottleneck |
| System design | Model training focus | Agentic workflows |

If agentic AI continues scaling — and enterprise adoption trends suggest it will — then memory intensity per workload rises, not falls. That is structurally bullish for Micron.

Key Takeaway

In short, Micron’s low valuation multiple isn’t about weak fundamentals. It’s about the market still anchoring AI to the GPU-first era defined by Nvidia.

But AI is no longer just about training and inference. It’s moving toward agentic systems where memory, bandwidth, and system-level coordination matter just as much as raw compute. That shift doesn’t dethrone Nvidia, but it does broaden the winners. And that’s where Micron enters the conversation differently.

If AI stays GPU-centric, Micron remains cyclical and cheap, but if AI becomes system-centric, Micron becomes structurally underpriced. That is the real tension investors are missing.

When all is said and done, Micron isn’t trading at a discount because growth is weak. It’s trading at a discount because the market is still telling yesterday’s AI story — not tomorrow’s.