Editor’s Note: a prior version of this story included a shortened section of a quote from Jim Cramer. This has been updated to include a full quote, and additional context.

David Faber: “Andy Jassy made a very significant decision to move forward with the Trainium chip a number of years ago, right? Instead of just saying, I want to buy all the NVIDIA I possibly can. But was that a good decision?”

Jim Cramer: “I have gone around the block with him on that, and he has been able to prove to me that it is a reasonable decision because of the cost of NVIDIA.”

That’s Jim Cramer on Mad Money, discussing Amazon (NASDAQ:AMZN) CEO Andy Jassy’s continued bet on custom silicon instead of doubling down on Nvidia (NASDAQ:NVDA). I’ve been following pretty much every report on this debate for a couple of years now, and here are my thoughts:

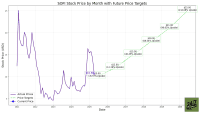

The Numbers Backing Jassy’s Decision

Jassy’s cost argument is backed by real numbers. AWS says Trainium2 delivers 30 to 40% better price-performance than comparable GPU-based instances. Amazon’s combined Graviton and Trainium chip business is now running at over $10 billion in annualized revenue, growing at triple-digit percentages year over year. At that scale, the custom silicon bet has already crossed into real commercial territory.

Jassy said on the Q4 2025 earnings call: “The dominant early leaders aren’t in a hurry to make that happen. It’s why we built our own custom silicon in training. And it’s really taken off.”

Trainium3 is already running production workloads, with nearly all of its supply expected to be committed by mid-2026. Trainium4 is on track for 2027, promising 6x the FP4 compute performance and 4x the memory bandwidth of Trainium3. At AWS scale, that cost advantage compounds into real margin improvement. AWS operating margin hit 35% in Q4 2025 despite massive infrastructure spend. That’s the custom silicon thesis working in real time.

Cramer’s source, SemiAnalysis CEO Dylan Patel, argues you need Nvidia for inference, not just training, specifically because of Groq, the inference-chip startup whose technology Nvidia licensed in late 2025. That’s a fair technical point. But Amazon isn’t abandoning Nvidia either. Nvidia secured a deal to supply 1 million GPUs to AWS over 2026 and 2027. Amazon is running both tracks simultaneously, which is exactly what a rational hyperscaler should do.

But There Is Another Side

The consumer AI interface story is a different conversation. ChatGPT, Claude, and Gemini have large user bases that rely on them daily. Alexa has not kept pace. If Cramer’s earlier comments are really about Amazon losing the consumer AI mindshare battle, that’s a legitimate concern. Infrastructure excellence doesn’t automatically translate into a compelling AI product that consumers reach for first.

AWS revenue grew 24% year over year in Q4 2025, its fastest pace in 13 quarters. The enterprise and cloud infrastructure story is strong. The consumer AI story needs work. Those are two separate questions, and conflating them leads to the wrong prescription. Going all-in on Nvidia doesn’t fix Alexa.

If you believe AWS’s custom silicon strategy will continue compressing inference costs and widening margins, Amazon’s forward setup looks compelling. If you believe consumer AI interfaces will define the next wave of value creation, that’s where the gap is real, and Cramer’s underlying concern deserves more credit than his Nvidia framing suggests.