Shares of Micron (Nasdaq: MU) are up 3.3% right before the market opens. Shares of SanDisk (Nasdaq: SNDK) are up slightly more, 4.2%. Both stocks saw heavy sell-offs yesterday. We published an article noting the sell-offs came after a massive sell-off in Korean equities that saw SK Hynix decline 11.5% and Samsung 9.9% the night before.

However, Korean equities crashed again last night, with the KOSPI down 12%. SK Hynix dropped another 9.6% while Samsung plunged 11.7%. Despite those losses, American memory stocks look poised to shake off the broader weakness. This raises the question of whether a large part of yesterday’s sell-off was driven by something other than the Korean rout. Reports are building that NVIDIA plans to launch a new system at its GTC conference later this month that could bypass high-bandwidth memory. Was yesterday’s sell-off mostly caused by investors getting out of memory names ahead of this event?

Let’s dig in.

NVIDIA Is Preparing A New Chip with Radically Different Architecture

I covered NVIDIA’s new chip in an article yesterday. Here are the key details from that article:

NVIDIA has long dominated AI training hardware, but inference favors efficiency over raw throughput, which is exactly why competitors have found an opening. Companies like Broadcom have argued that NVIDIA’s GPUs aren’t specialized enough for inference and will soon prove too expensive.

Recently, NVIDIA purchased the IP and most of the employees from startup Groq.

The specifics of Groq’s technology are fairly technical, but here’s the key idea. Groq has been working on an entirely different architecture from NVIDIA’s GPUs.

In short, it utilizes a compiler that pre-plans operations. So instead of needing to coordinate high-bandwidth memory, Groq’s chips execute a schedule using on-chip SRAM.
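To make the idea of a pre-planned schedule concrete, here is a toy sketch in Python. It is not Groq’s or NVIDIA’s actual compiler: the operation names, the cycle counts, and the `compile_schedule` helper are all hypothetical, chosen only to show how every step can be given a fixed start time before anything runs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Op:
    name: str       # hypothetical operation name
    inputs: tuple   # names of ops whose results this op consumes
    latency: int    # cycles the op takes, assumed known ahead of time


def compile_schedule(ops):
    """Assign each op a fixed start cycle before execution begins.

    Assumes `ops` is already listed in dependency order.
    """
    ready = {}      # op name -> cycle at which its result is available
    schedule = []
    for op in ops:
        # An op starts as soon as all of its inputs are ready.
        start = max((ready[i] for i in op.inputs), default=0)
        ready[op.name] = start + op.latency
        schedule.append((start, op.name))
    return schedule


# Made-up workload: two loads feed a matrix multiply, then an activation.
ops = [
    Op("load_weights", (), 2),
    Op("load_input", (), 1),
    Op("matmul", ("load_weights", "load_input"), 4),
    Op("activation", ("matmul",), 1),
]

schedule = compile_schedule(ops)
for start, name in schedule:
    print(f"cycle {start}: {name}")
```

Because the timetable is fixed in advance, the hardware in this toy model never has to ask at run time whether data has arrived. That is also why it only works if every latency is known exactly.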

The downside to this architecture is that pre-compiling is difficult. You need chips to be perfectly synchronized, which is an incredibly difficult engineering challenge. NVIDIA has presented innovations at recent conferences that strongly hint the company has found a way to synchronize Groq’s chips.

So, the market is putting two and two together. NVIDIA shelled out $20 billion for Groq, and plans to utilize its ‘clock-forwarded die-to-die links’ to commercialize Groq. It’s expected NVIDIA could announce this new chip built for inferencing workloads at GTC, which takes place later this month.

The SRAM Threat: A Structural Problem, Not Just Sentiment

The key section above that could impact memory makers is NVIDIA’s focus on SRAM (static RAM) rather than the high-bandwidth memory (HBM) that currently powers its AI accelerators. To understand why that matters, you need to know what these two types of memory actually do.

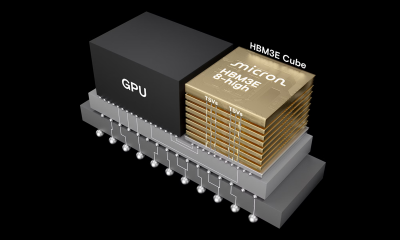

HBM, or high-bandwidth memory, is the expensive, power-hungry, performance-dense stacked memory packaged right alongside AI chips. It is what lets a GPU move massive amounts of data fast enough to train and run large AI models. Micron is one of the primary suppliers of HBM for NVIDIA’s current generation of chips. SanDisk’s enterprise NAND flash plays a complementary role in AI data center storage, and NVIDIA has put more of a focus on NAND with the unveiling of its BlueField networking platform.

SRAM is a fundamentally different animal. It is faster and lower-latency than HBM, but it is also far more expensive per bit and cannot scale to the same capacities. The reason it is relevant now is inference. Training AI models requires enormous memory bandwidth. NVIDIA’s new chip reportedly doesn’t have to constantly delegate operations to external memory. Instead, its operations are compiled up front and executed out of on-chip SRAM.

I know that’s technical, but it presents a brand-new architecture that could allow NVIDIA to blunt competitive threats from companies like Broadcom (Nasdaq: AVGO) while also selling a high volume of chips that no longer rely on HBM or NAND, the markets that have led to such incredible gains for SanDisk and Micron recently.

This is not a confirmed product. But in semiconductor markets, the threat of a design shift is often enough to reprice stocks before the reality arrives.

Blip or Re-Rating?

Don’t expect NVIDIA’s new product (if announced at GTC) to have a massive impact on near-term forecasts for SanDisk and Micron.

Micron’s guidance points to $18.7 billion in revenue next quarter. I am very confident they’ll beat that. Likewise, SanDisk will put up historic EPS growth this year.

Yet the broader concern is whether NVIDIA’s new chips could reverse the narrative of a long-running memory supercycle that could last through the end of the decade. If they do, it could cause a re-rating for both stocks that would be very painful after their recent run-ups.