Nvidia’s Unstoppable Momentum in the AI Era

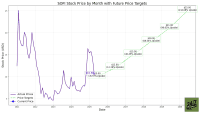

Nvidia (NASDAQ:NVDA) has been on a tear, transforming from a gaming graphics powerhouse into the undisputed king of artificial intelligence hardware. Its revenue soared 56% year-over-year in the latest quarter, fueled by insatiable demand for its GPUs that power everything from ChatGPT to autonomous vehicles.

The secret sauce? Nvidia’s ability to deliver cutting-edge chips at scale, capturing nearly 90% of the AI accelerator market and minting billionaires along the way.

Looking ahead, Nvidia’s Blackwell platform, its latest generation of AI accelerators, promises to sustain this blistering growth for years. Unveiled in March 2024, Blackwell chips boast unprecedented performance, with Nvidia claiming up to 30 times the performance of the prior generation on large language model inference. Early adopters like Meta Platforms (NASDAQ:META) and Microsoft (NASDAQ:MSFT) are already snapping them up, and production ramps are expected to flood the market by late 2025.

Even as Nvidia invests heavily in its Rubin architecture — the next leap in AI silicon set for 2026 — Blackwell ensures a seamless growth trajectory. Analysts project Nvidia’s data center revenue could double again by 2027, solidifying its moat against rivals.

This isn’t just hype; it’s a testament to Nvidia’s engineering prowess and ecosystem lock-in, with software like CUDA keeping developers hooked. In an AI arms race heating up globally, Nvidia’s position looks ironclad, poised to ride the wave of trillion-dollar investments in compute power.

A Bold Move on OpenAI’s Custom Needs

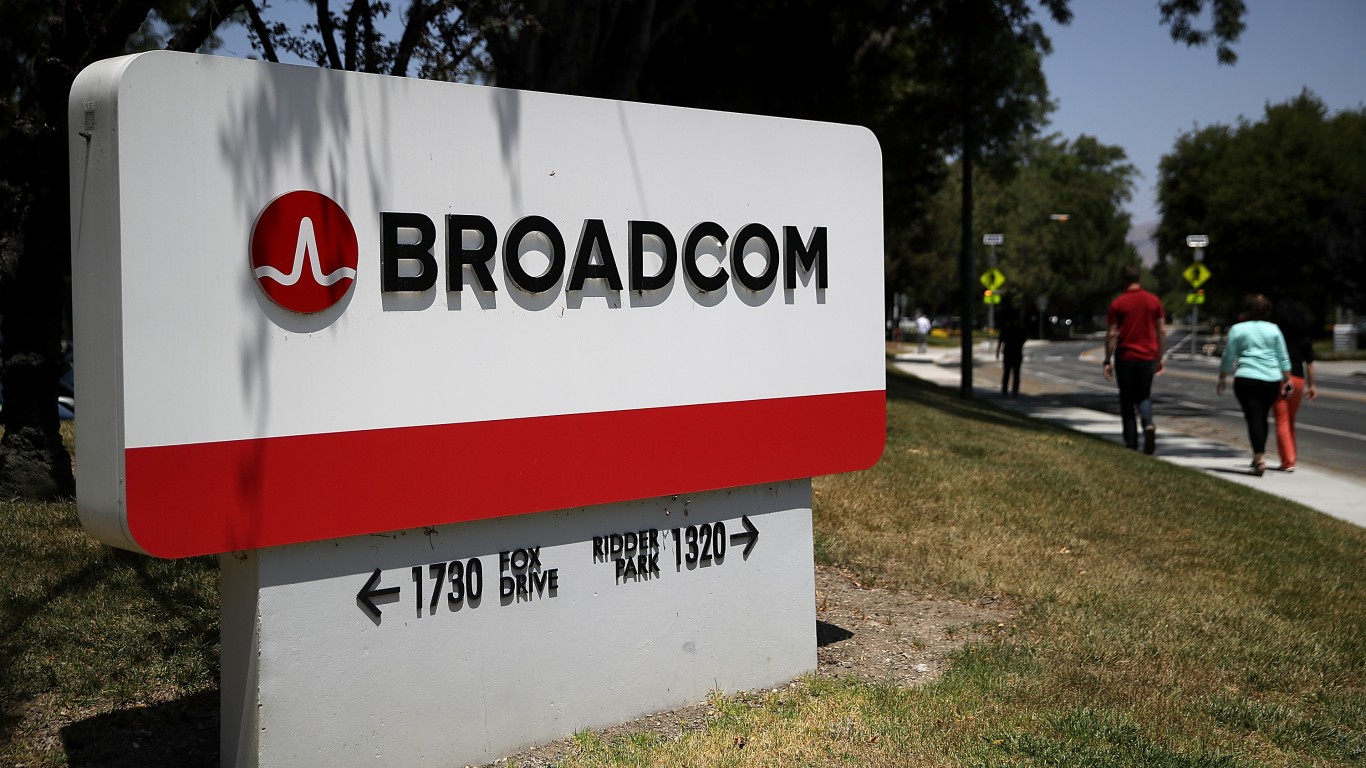

In a twist that could have a significant long-term impact, Broadcom (NASDAQ:AVGO) appears to have lured away one of Nvidia’s crown-jewel customers: OpenAI. Reports suggest OpenAI is collaborating with Broadcom on a groundbreaking $10 billion order for custom AI accelerators, marking a seismic shift in the hyperscale AI landscape.

This deal, disclosed in Broadcom’s recent earnings (though the customer was unnamed), involves hyperspecific chips tailored to OpenAI’s voracious needs for training models like GPT-5 and beyond.

OpenAI, the creator of ChatGPT, has long relied on Nvidia’s off-the-shelf GPUs for its compute-intensive operations. But as AI models balloon in size and complexity, the allure of bespoke silicon — optimized for energy efficiency and cost savings — has grown irresistible.

Broadcom’s expertise in application-specific integrated circuits (ASICs) positions it perfectly to deliver these tailored solutions, potentially slashing power consumption by up to 50% compared to general-purpose GPUs. This partnership isn’t starting from scratch; whispers suggest initial prototypes are already in testing, with full deployment eyed for 2026.

Implications for the AI Chip Titans

For Broadcom, this $10 billion windfall is a game-changer, accelerating its pivot toward AI dominance. Already a leader in networking and custom chips for giants like Google and Meta, Broadcom’s AI revenue now accounts for nearly half its semiconductor sales, up from a fraction just two years ago.

CEO Hock Tan has touted the company’s “growing share” in this niche, and this OpenAI coup could balloon its backlog to $50 billion or more. It signals Broadcom’s maturation as a full-stack AI player, blending hardware design with software integration to challenge the status quo.

Nvidia, however, faces a more nuanced challenge. OpenAI accounts for a large share of demand for its chips, much of it indirectly through partners like Microsoft, so losing even partial orders underscores Nvidia’s customer concentration risk. Its top four customers drove 46% of revenue in the second quarter, leaving it vulnerable to shifts toward custom alternatives.

That said, Nvidia’s ecosystem remains unmatched, with Blackwell’s versatility ensuring broad appeal. The real sting, though, is that this deal could embolden other Nvidia clients, from Anthropic to xAI, to explore Broadcom’s offerings for specialized workloads, eroding Nvidia’s near-monopoly in high-end AI training.

A Ripple Effect in the AI Supply Chain

The OpenAI-Broadcom alliance might just be the spark that ignites a broader exodus. As AI inference demands explode — think real-time chatbots and edge computing — custom chips offer a compelling edge over Nvidia’s one-size-fits-all approach.

We’ve seen Meta design its MTIA chips with Broadcom, and Alphabet’s TPUs, also co-developed with Broadcom, boast reported efficiency gains of up to 33 times. If OpenAI’s success story spreads, expect more hyperscalers to hedge their bets, pressuring Nvidia to innovate faster while boosting Broadcom’s order pipeline. This competition could lower costs industry-wide, benefiting end-users but squeezing margins for pure-play GPU makers.

Key Takeaway

OpenAI’s pivot to Broadcom won’t shatter Nvidia: its Blackwell arsenal and software fortress ensure continued dominance, with revenue growth likely to remain strong year after year. But it spotlights Broadcom as a rising force in AI, leveraging custom designs to carve out a lucrative niche.

While Nvidia’s scale dwarfs Broadcom’s, the latter’s diversified portfolio — from wireless to data centers — offers steadier growth with less volatility. For investors eyeing AI exposure, Broadcom might just be the sharper pick, blending explosive upside with a more balanced risk profile in this red-hot sector.