NVIDIA (NASDAQ:NVDA) CEO Jensen Huang recently appeared on the Dwarkesh Podcast and made one of his most direct competitive claims yet: “NVIDIA’s computing stack is the best performance per TCO in the world, bar none. Nobody can demonstrate to me that any single platform in the world today has better performance TCO ratio. Not one company.”

TCO, or total cost of ownership, refers to the full cost of running a system over time, including hardware, power, cooling, and software, not just the sticker price of a chip. Huang argues that once all of those costs are counted, no competing platform delivers more AI output per dollar spent.
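To make the metric concrete, here is a minimal Python sketch of a performance-per-TCO comparison. It assumes TCO is the upfront hardware cost plus yearly power, cooling, and software costs, and that "AI output" is measured in tokens generated. The `Platform` fields, both function names, and every number are hypothetical and chosen only for illustration; none of them come from NVIDIA, Google, or any benchmark.

```python
from dataclasses import dataclass

SECONDS_PER_YEAR = 365 * 24 * 3600


@dataclass
class Platform:
    """Hypothetical cost and throughput profile for one AI system."""
    name: str
    hardware_cost: float         # upfront purchase price, USD
    annual_power_cost: float     # USD per year
    annual_cooling_cost: float   # USD per year
    annual_software_cost: float  # USD per year
    tokens_per_second: float     # sustained inference throughput


def total_cost_of_ownership(p: Platform, years: float) -> float:
    """Upfront hardware cost plus recurring costs over the period."""
    recurring = (p.annual_power_cost
                 + p.annual_cooling_cost
                 + p.annual_software_cost)
    return p.hardware_cost + years * recurring


def tokens_per_dollar(p: Platform, years: float) -> float:
    """Tokens generated over the period divided by TCO (full utilization assumed)."""
    tokens = p.tokens_per_second * years * SECONDS_PER_YEAR
    return tokens / total_cost_of_ownership(p, years)


# Illustrative numbers only: a pricier, faster system vs. a cheaper, slower one.
pricey = Platform("pricey", 3_000_000, 200_000, 50_000, 50_000, 1_000_000)
cheap = Platform("cheap", 1_000_000, 150_000, 50_000, 100_000, 300_000)

for p in (pricey, cheap):
    print(f"{p.name}: TCO ${total_cost_of_ownership(p, 4):,.0f}, "
          f"{tokens_per_dollar(p, 4):,.0f} tokens per dollar over 4 years")
```

With these made-up inputs, the system with the higher sticker price still produces more tokens per dollar, which is the shape of the argument Huang is making. Whether real platforms behave this way depends entirely on the actual cost and throughput figures.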

What Huang Actually Claimed

Huang went beyond a general claim. He argued NVIDIA leads on both performance per dollar for token generation and performance per watt, and he challenged competitors directly, saying TPUs and Trainium will not show up to compete at benchmarks like MLPerf Inference Max. NVIDIA’s own benchmark results support this confidence. In the latest MLPerf inference results, GB200 NVL72 delivered up to 30x higher inference throughput than an 8-GPU H200 submission on the Llama 3.1 benchmark. Huang has also called Grace Blackwell with NVLink “the king of inference today, delivering an order-of-magnitude lower cost per token.”

The CUDA Argument, Reframed

Huang pushed back on the idea that NVIDIA’s edge is simply developer lock-in through CUDA. He argued the margins come from genuine performance advantages, with NVIDIA engineers embedding deeply with AI labs and regularly delivering 2x or 3x performance improvements on existing infrastructure, which translates directly to customer revenue.

NVIDIA’s CFO noted that software optimizations improved Blackwell’s performance by 1.5x in a single month, and that Hopper’s inference performance improved fourfold over two years through the CUDA ecosystem.

The Pushback Investors Should Not Ignore

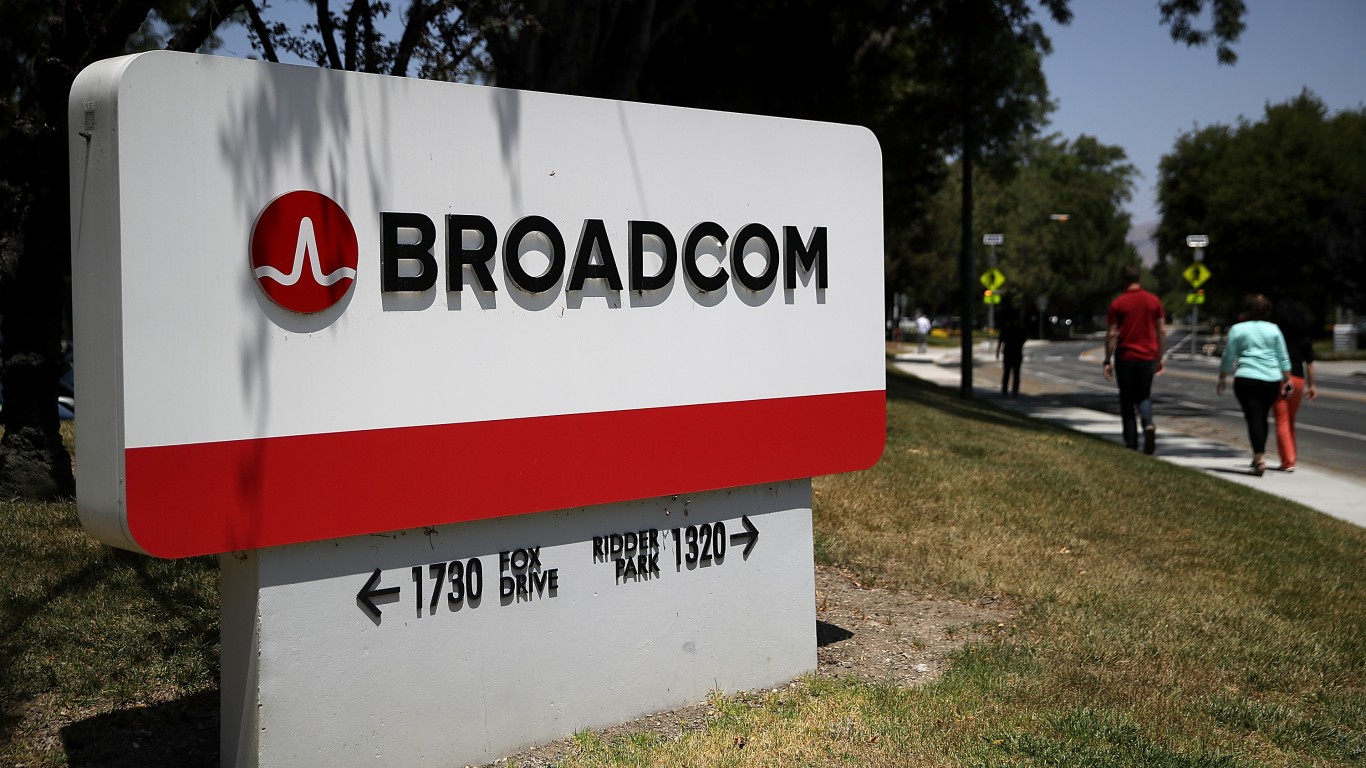

The podcast host raised a real counterpoint. Anthropic announced a multi-gigawatt deal with Broadcom and Google for TPUs. If hyperscalers can afford to build custom silicon, does CUDA still matter? Huang called the premise wrong, but the deal is an observable fact, not a hypothetical.

Broadcom (NASDAQ:AVGO) reported AI chip revenue of $8.4 billion in Q1 FY2026, up 106% year over year, driven by custom accelerators for hyperscalers. CEO Hock Tan set a goal to exceed $100 billion in AI sales by 2027. Meanwhile, Alphabet (NASDAQ:GOOGL) guided for $175 to $185 billion in 2026 capital expenditures, a significant portion funding TPU development and AI infrastructure that competes directly with NVIDIA’s stack.

For investors: Huang’s TCO claims are backed by benchmark data and accelerating financials, with NVIDIA posting $68.1 billion in Q4 FY2026 revenue, up 73% year over year, and guiding for approximately $78 billion in Q1 FY2027. But the custom silicon build-out at Google and Broadcom represents real capital deployed at scale. The debate between general-purpose GPU dominance and purpose-built accelerators remains unsettled, and that tension is worth watching as hyperscaler CapEx commitments translate into actual silicon deployments over the next two years.