It feels like shares of Nvidia (NASDAQ:NVDA) are on pause, as if waiting for AI demand to fade so investors can throw in the towel en masse. Of course, the company's sky-high margins can't be sustainable over the long term, right? In a prior piece, I compared Nvidia's near-75% gross margins to those of a software company. Nvidia may be a GPU seller, but the chips aren't the firm's secret weapon.

Rather, it's the Nvidia ecosystem, meaning the software and tooling that come alongside the chips, that could keep customers around and upgrading for years to come. As the company keeps innovating across the stack, leaving the Nvidia ecosystem could prove difficult, even as competing products get cheaper and custom silicon promises better efficiency for the age of AI inference.

The big Groq deal couldn’t have come at a better time

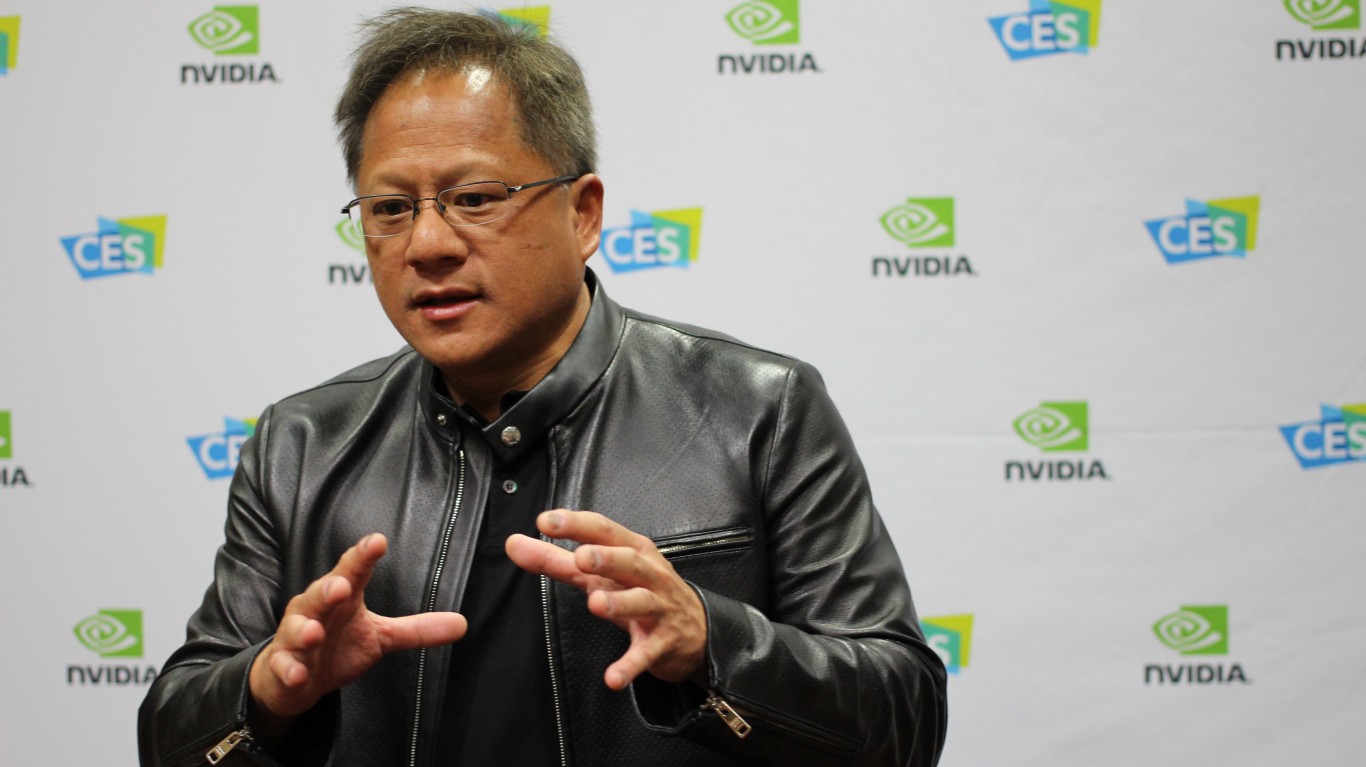

When Nvidia inked a major deal with Groq at the end of 2025, it felt like Jensen Huang and company were ready to win in the inference age.

Of course, time will tell whether Nvidia's ability to lock customers in (not just with leading chip benchmarks but with everything else that comes with its ecosystem) can keep it a profitability engine for a while longer as AI demand stays off the charts. Nvidia is starting to look cheap again, but it's only cheap if its margins don't face significant compression at some point over the next few years. In my view, AI demand is not just alive and well; it could even take a turn for the better.

There will be winners that aren’t named Nvidia as the semiconductor scene gets a bit more crowded, but in the meantime, I think the “value” case for holding onto shares still makes sense, even if the momentum is no longer behind the stock.

As for Groq, its language processing units (LPUs) may very well help Nvidia gear up for an inflection point of sorts driven by agentic AI. As Nvidia doubles down on its "AI factory" game plan, I think Jensen Huang beat out rivals in landing a major silicon player that could further enhance the experience within Nvidia's ecosystem.

CUDA: Nvidia’s edge in the AI hardware wars

You've probably heard that CUDA, Nvidia's software layer, has acted as an economic moat for the company. In many ways, it's true that CUDA is one of the critical moat components that could help keep Jensen Huang's empire head and shoulders above the competition. As the company keeps adding to its software stack, though, I think there's a chance CUDA could leave the competition even further behind.

With CUDA-X Agentic Libraries and new offerings such as NemoClaw, it's getting harder to switch away from Nvidia. Arguably, there may be no reason to, especially since the firm keeps adding tools that feel years ahead. For firms looking to experiment with quantum computing (think the CUDA-Q model and toolchain) or Omniverse, Nvidia seems like the place to be. Until that changes, I see Nvidia as having what it takes to preserve its margins, even as rivals become more capable by following a more Nvidia-like game plan of taking control of the entire stack.

Perhaps the biggest risk, I think, is that the hyperscalers are willing to roll up their sleeves and pay the price to take control of their own AI stacks, from the chips all the way to the software and everything in between. It's hard to tell how things will play out, but for firms not willing to reinvent the wheel, Nvidia may be worth sticking with as it becomes an enabler of agentic AI and quantum AI, so to speak.